DynamoDB Streams - The Ultimate Guide (w/ Examples)

Written by Rafal Wilinski

Published on May 26th, 2021

What are DynamoDB Streams? How do they work?

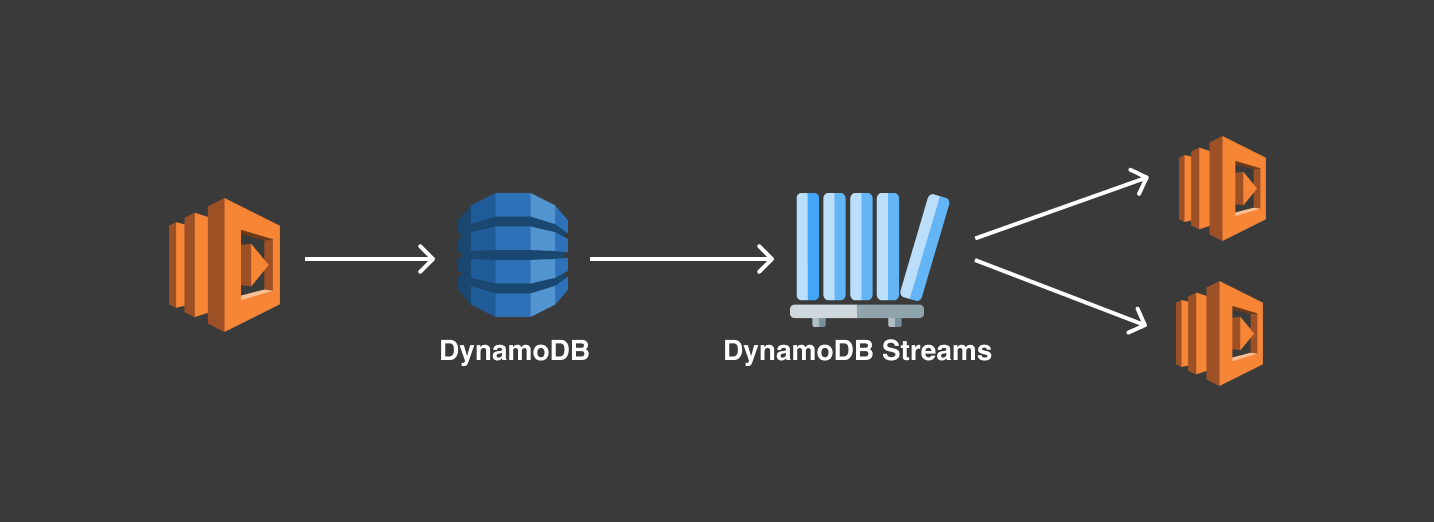

A DynamoDB Stream can be described as a stream of observed changes in data, a pattern technically called Change Data Capture (CDC). Once enabled, whenever you perform a write operation on the DynamoDB table, like a put, update, or delete, a corresponding event is saved to the Stream in near-real time. The event describes which record was changed and what was changed.

Characteristics of DynamoDB Stream

- Events are stored up to 24 hours.

- Ordered, for each item the sequence of events in the stream reflects the actual sequence of modifications made to that item in the table.

- Near-real time, events are available in the stream within less than a second from the moment of the write operation.

- Deduplicated, each modification corresponds to exactly one record within the stream.

- No-op operations, like `PutItem` or `UpdateItem` calls that do not change the record, are ignored.

Anatomy of DynamoDB Stream

A Stream consists of Shards. Each Shard is a group of Records, where each record corresponds to a single data modification in the table related to that stream.

Shards are automatically created and deleted by AWS. A shard can also split into multiple shards, and this likewise happens automatically, without any action on our side.

Moreover, when creating a stream you have a few options on what data should be pushed to the stream. Options include:

- `OLD_IMAGE` - Stream records will contain an item before it was modified.

- `NEW_IMAGE` - Stream records will contain an item after it was modified.

- `NEW_AND_OLD_IMAGES` - Stream records will contain both pre and post-change snapshots.

- `KEYS_ONLY` - Stream records will contain only the primary key attributes of the modified item.
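To make the four view types concrete, here is a minimal Python sketch. The `STREAM_SPECIFICATION` dictionary follows the shape accepted by DynamoDB's `CreateTable` and `UpdateTable` APIs. The `images_for` helper and the sample item are hypothetical and only simulate which parts of an item end up in a stream record for each view type.

```python
# Parameter shape accepted by DynamoDB's CreateTable / UpdateTable APIs
# (for example via boto3's `update_table(..., StreamSpecification=...)`).
STREAM_SPECIFICATION = {
    "StreamEnabled": True,
    "StreamViewType": "NEW_AND_OLD_IMAGES",
}


def images_for(view_type, keys, old_item, new_item):
    """Hypothetical helper: simulate the `dynamodb` section of a stream record."""
    payload = {"Keys": keys}  # the primary key is present for every view type
    if view_type in ("OLD_IMAGE", "NEW_AND_OLD_IMAGES") and old_item is not None:
        payload["OldImage"] = old_item
    if view_type in ("NEW_IMAGE", "NEW_AND_OLD_IMAGES") and new_item is not None:
        payload["NewImage"] = new_item
    return payload


# Made-up item, written in DynamoDB JSON format.
keys = {"id": {"S": "user-1"}}
old = {"id": {"S": "user-1"}, "name": {"S": "Ann"}}
new = {"id": {"S": "user-1"}, "name": {"S": "Anna"}}

for view_type in ("KEYS_ONLY", "OLD_IMAGE", "NEW_IMAGE", "NEW_AND_OLD_IMAGES"):
    # Print which sections a record would carry for this view type.
    print(view_type, sorted(images_for(view_type, keys, old, new)))
```

If downstream consumers need to compare values before and after a change, `NEW_AND_OLD_IMAGES` is the only option that carries both snapshots.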

DynamoDB Lambda Trigger

DynamoDB Streams work particularly well with AWS Lambda due to its event-driven nature. Lambda functions scale with the amount of data pushed through the stream and are only invoked if there's data that needs to be processed.

In Serverless Framework, you subscribe your Lambda function to a DynamoDB stream by adding a `stream` event of type `dynamodb`, together with the stream's ARN, to the function's `events` list.

The event delivered to your Lambda function contains a `Records` array with one entry per stream record. A few important points to note about each record:

- The `eventName` property can be one of three values: `INSERT`, `MODIFY`, or `REMOVE`.

- The `dynamodb.Keys` property contains the primary key of the record that was modified.

- The `dynamodb.NewImage` property contains the new values of the record that was modified. Keep in mind that this data is in DynamoDB JSON format, not plain JSON. You can use a library like `dynamodb-streams-processor` by Jeremy Daly to convert it to plain JSON.
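The properties above can be illustrated with a small Python handler. This is only a sketch: `SAMPLE_EVENT` is a made-up event shaped like the ones Lambda receives from a DynamoDB stream, and `from_dynamodb_json` and `unmarshal` are hypothetical helpers written by hand for this example. In a real project, boto3's `TypeDeserializer` can do the conversion instead.

```python
from decimal import Decimal


def from_dynamodb_json(value):
    """Convert one typed value, e.g. {"S": "hi"}, to a plain Python value."""
    type_tag, raw = next(iter(value.items()))
    if type_tag in ("S", "BOOL"):
        return raw
    if type_tag == "N":
        return Decimal(raw)  # numbers arrive as strings
    if type_tag == "NULL":
        return None
    if type_tag == "L":
        return [from_dynamodb_json(v) for v in raw]
    if type_tag == "M":
        return {k: from_dynamodb_json(v) for k, v in raw.items()}
    return raw  # B, SS, NS and BS are left unconverted in this sketch


def unmarshal(image):
    """Convert a whole item image from DynamoDB JSON to a plain dict."""
    return {name: from_dynamodb_json(value) for name, value in image.items()}


# Made-up event with a single INSERT record.
SAMPLE_EVENT = {
    "Records": [
        {
            "eventID": "1",
            "eventName": "INSERT",
            "eventSource": "aws:dynamodb",
            "dynamodb": {
                "Keys": {"id": {"S": "user-1"}},
                "NewImage": {
                    "id": {"S": "user-1"},
                    "age": {"N": "31"},
                    "tags": {"L": [{"S": "new"}]},
                },
                "StreamViewType": "NEW_AND_OLD_IMAGES",
            },
        }
    ]
}


def handler(event, context=None):
    """Summarize each stream record as a plain Python dict."""
    changes = []
    for record in event["Records"]:
        data = record["dynamodb"]
        changes.append({
            "event": record["eventName"],
            "keys": unmarshal(data["Keys"]),
            # NewImage is absent for REMOVE events and for some view types.
            "new": unmarshal(data["NewImage"]) if "NewImage" in data else None,
        })
    return changes


print(handler(SAMPLE_EVENT))
```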

Filtering DynamoDB Stream events

One of the recently announced features of Lambda is the ability to filter events, including those coming from a DynamoDB Stream. Filtering is especially useful if you want to process only a subset of the events in the stream, e.g., only events that delete records or update a specific entity. This is also a great way to reduce the amount of data that your Lambda function processes, which drives the operational burden and costs down.

To create an event source mapping with filter criteria using the AWS CLI, pass the `--filter-criteria` argument to the `aws lambda create-event-source-mapping` command.

The `--filter-criteria` argument uses the same syntax as EventBridge event patterns.
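As a rough illustration, the Python sketch below builds filter criteria in the structure Lambda expects: a `Filters` list in which every `Pattern` is a JSON string. The `build_filter_criteria` helper and the `entityType` attribute are assumptions made for this example, not part of any AWS library.

```python
import json


def build_filter_criteria(*patterns):
    """Wrap event patterns in the structure used by Lambda's filter criteria."""
    # Each pattern must be serialized to a JSON string.
    return {"Filters": [{"Pattern": json.dumps(pattern)} for pattern in patterns]}


# Only pass REMOVE events (deleted items) to the function.
only_deletes = build_filter_criteria({"eventName": ["REMOVE"]})

# Only pass inserts whose new image has entityType = "ORDER".
# `entityType` is a hypothetical attribute name.
only_new_orders = build_filter_criteria({
    "eventName": ["INSERT"],
    "dynamodb": {"NewImage": {"entityType": {"S": ["ORDER"]}}},
})

# The serialized form is what the CLI argument takes as its value.
print(json.dumps(only_deletes))
print(json.dumps(only_new_orders))
```

The resulting dictionary can be passed as `FilterCriteria` to boto3's `create_event_source_mapping`, and its JSON form is the value of the CLI's `--filter-criteria` argument.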

DynamoDB Streams Example Use Cases

DynamoDB Streams are great if you want to decouple your application's core business logic from effects that should happen afterward. Your base code can stay minimal while you can still "plug in" more Lambda functions reacting to changes as your software evolves. This enables not only separation of concerns but also better security, and it reduces the impact of possible bugs. Streams can also be used as an alternative to Transactions if consistency and atomicity are not required.

Data replication

Even though cross-region data replication can be solved with DynamoDB Global tables, you may still want to replicate your data to a DynamoDB table in the same region or push it to RDS or S3. DynamoDB Streams are perfect for that.
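A replication consumer can be sketched in a few lines of Python. In this example the "replica" is just an in-memory dictionary standing in for the target store (another table, RDS, or S3), and `apply_record` is a hypothetical helper. It assumes the stream is configured with `NEW_IMAGE` or `NEW_AND_OLD_IMAGES`, so that `NewImage` is available.

```python
import json


def apply_record(replica, record):
    """Apply one stream record to `replica`, a dict keyed by primary key."""
    data = record["dynamodb"]
    # Serialize the key attributes to get a stable, hashable identifier.
    key = json.dumps(data["Keys"], sort_keys=True)
    if record["eventName"] in ("INSERT", "MODIFY"):
        replica[key] = data["NewImage"]  # overwrite with the latest snapshot
    elif record["eventName"] == "REMOVE":
        replica.pop(key, None)  # tolerate a delete that was already applied
    return replica


# Made-up records describing the life cycle of a single item.
KEYS = {"id": {"S": "user-1"}}
records = [
    {"eventName": "INSERT", "dynamodb": {"Keys": KEYS, "NewImage": {"id": {"S": "user-1"}, "name": {"S": "Ann"}}}},
    {"eventName": "MODIFY", "dynamodb": {"Keys": KEYS, "NewImage": {"id": {"S": "user-1"}, "name": {"S": "Anna"}}}},
    {"eventName": "REMOVE", "dynamodb": {"Keys": KEYS}},
]

replica = {}
for record in records:
    apply_record(replica, record)
    print(record["eventName"], len(replica))
```

Because every write replaces the whole item and deletes are tolerant of missing keys, applying the same record twice leaves the replica unchanged.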

Content moderation

DynamoDB Streams are also useful for writing "middlewares". You can easily decouple business logic from asynchronous validation or side effects. One example of such a case is content moderation. Once a message or image is added to a table, the DynamoDB Stream passes that record to a Lambda function, which validates it against AWS Artificial Intelligence services such as Amazon Rekognition or Amazon Comprehend.

Search

Sometimes the data must also be replicated to other data stores, like Elasticsearch, where it can be indexed to make it searchable. DynamoDB Streams allow that too.

Real-time analytics

DynamoDB Streams can be used to power real-time analytics by streaming data changes to analytics services like Amazon Kinesis or AWS Glue. This allows businesses to gain insights and make data-driven decisions almost instantaneously.

Auditing and compliance

For applications that require strict auditing and compliance, DynamoDB Streams can be used to maintain a detailed log of all changes to the data. This log can be stored in a secure location and analyzed to ensure compliance with regulatory requirements.

Best practices

- Be aware of the eventual consistency of that solution. DynamoDB Stream events are near-real time but not real-time. There will be a small delay between the time of the event and the time of the event delivery.

- Be aware of constraints: events in the stream are retained for 24 hours, and no more than two processes should read from a single stream shard at the same time.

- To achieve the best separation of concerns, use one Lambda function per DynamoDB Stream. It will help you keep IAM permissions minimal and code as simple as possible.

- Handle failures. Wrap the whole processing logic in a `try/catch` clause, store the failed events in a DLQ (Dead Letter Queue), and retry them later.

- Monitor and scale. Use CloudWatch to monitor the performance and health of your streams and Lambda functions. Ensure that your architecture can scale to handle peak loads.
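The failure-handling advice above could look roughly like the following Python sketch. Everything here is an assumption made for illustration: `process_record` stands in for your business logic, and `send_to_dlq` is a hypothetical callable that would forward the failed record to a real queue such as SQS.

```python
def process_record(record):
    """Hypothetical business logic; raises on records it cannot handle."""
    if "dynamodb" not in record:
        raise ValueError("malformed stream record")
    return record["eventName"]


def make_handler(send_to_dlq):
    """Build a handler that diverts failed records to `send_to_dlq`."""
    def handler(event, context=None):
        processed = 0
        for record in event["Records"]:
            try:
                process_record(record)
                processed += 1
            except Exception as error:
                # Keep the record and the reason so it can be retried later.
                send_to_dlq({"record": record, "error": str(error)})
        return {"processed": processed}
    return handler


dead_letters = []  # stands in for a real dead letter queue
handler = make_handler(dead_letters.append)

result = handler({"Records": [
    {"eventID": "1", "eventName": "INSERT", "dynamodb": {"Keys": {"id": {"S": "a"}}}},
    {"eventID": "2", "eventName": "MODIFY"},  # malformed on purpose
]})
print(result, len(dead_letters))
```

Catching errors per record keeps one bad event from blocking the rest of the batch. Injecting `send_to_dlq` keeps the handler easy to test without any AWS resources.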

Notifications and sending e-mails

Similarly to the content moderation example, once a message is saved to a DynamoDB table, a Lambda function subscribed to that table's stream invokes Amazon Pinpoint or SES to notify recipients about it.

Frequently Asked Questions

How much do DynamoDB streams cost?

DynamoDB Streams are billed on a "Read Request Units" basis. To learn more about them, head to our DynamoDB Pricing calculator.

How can I view DynamoDB stream metrics?

DynamoDB Stream metrics can be viewed in two places:

- In AWS DynamoDB Console in the Metrics tab

- Using Amazon CloudWatch

What are DynamoDB Stream delivery guarantees?

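Each data modification appears exactly once in the stream, so the stream itself contains no duplicate records. Consumers can still see the same record more than once, for example when Lambda retries a failed batch, so processing logic should be idempotent.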

Can I filter DynamoDB Stream events coming to my Lambda function?

Yes, you can use an "event source mapping" with filter criteria to filter events coming to your Lambda function. It uses the same syntax as EventBridge event patterns.