DynamoDB On-Demand Scaling vs Provisioned with Auto-Scaling [The Ultimate Comparison]

Written by Charlie Fish

Published on October 26th, 2021


Introduction

There are multiple ways to provision your DynamoDB tables. Originally, the only option was to manually provision your tables' throughput. However, this approach did not make it easy to scale up and down with usage or demand. A sudden spike meant your table wouldn't have enough throughput to handle requests, leading to errors. The only workaround was to build your own tooling that called the AWS APIs to scale your tables. For a fully managed database, this created extra work for you.

Fast forward to June 2017, and AWS announced Provisioned with Auto-Scaling. Provisioned with Auto-Scaling allowed you to specify a target utilization, minimum and maximum provisioned capacity, and AWS would work to achieve the target utilization by scaling your table throughput up and down as needed. This was great at handling increases in database load over time; however, extremely rapid spikes in load could still occur faster than the table could scale.

This issue was addressed by AWS in November 2018 at AWS re:Invent 2018 with the introduction of On-Demand Scaling. I like to refer to this as DynamoDB Serverless. With On-Demand Scaling, you don't need to think about provisioning throughput. Instead, your table will scale behind the scenes automatically. Extreme spikes in load can occur and be handled seamlessly by AWS. This also brings a change in the cost structure for DynamoDB. With On-Demand Scaling, instead of paying for provisioned throughput, you pay per read or write request.

Provisioned with Auto-Scaling

How it works

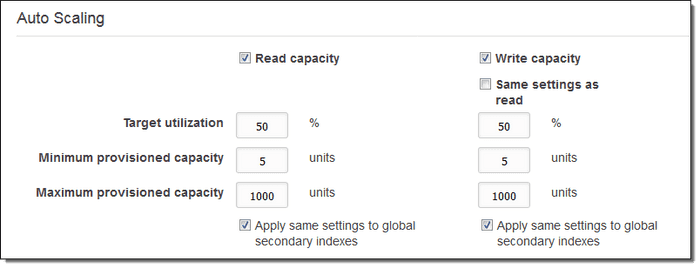

Provisioned with Auto-Scaling takes in a few options for both read capacity and write capacity in order to optimize your table:

- Target utilization - The percentage of your provisioned capacity that you want consumed capacity to represent. DynamoDB scales your table to keep consumed capacity close to this percentage. For example, if you set this to 70% and your table is consuming 70 capacity units, Auto-Scaling will aim to set the provisioned capacity to 100 units (70 / 0.70 = 100).

- Minimum provisioned capacity - This is the minimum provisioned capacity that your table will allow. DynamoDB will not scale your table's provisioned capacity below this value.

- Maximum provisioned capacity - This is the maximum provisioned capacity that your table will allow. DynamoDB will not scale your table's provisioned capacity above this value.

These values apply to both the read capacity and the write capacity. You can also choose to set the write capacity to match the read capacity.
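The target utilization arithmetic can be sketched as a small function. This is a simplified model, assuming Auto-Scaling divides consumed capacity by the target utilization and clamps the result to the configured minimum and maximum; the real target tracking policy also involves CloudWatch alarms and cooldowns, and the function name is hypothetical.

```javascript
// Simplified model of target-utilization scaling (hypothetical helper, not an AWS API).
// consumedUnits: capacity units currently being consumed
// targetPercent: target utilization as a whole-number percentage (e.g. 70)
// minUnits / maxUnits: the configured minimum and maximum provisioned capacity
function desiredProvisionedCapacity(consumedUnits, targetPercent, minUnits, maxUnits) {
  if (targetPercent <= 0 || targetPercent > 100) {
    throw new RangeError("targetPercent must be in (0, 100]");
  }
  // Whole-number percentages keep the math exact, so floating-point error
  // cannot push Math.ceil up by an extra unit.
  const ideal = Math.ceil((consumedUnits * 100) / targetPercent);
  // Never go below the minimum or above the maximum.
  return Math.min(maxUnits, Math.max(minUnits, ideal));
}

// Example from the text: 70 units consumed at a 70% target -> 100 units.
console.log(desiredProvisionedCapacity(70, 70, 5, 1000)); // 100
```

The minimum and maximum act as hard bounds: even a tiny load keeps the table at the minimum, and a huge load is capped at the maximum.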

Remember, table capacity works like horizontal scaling. However, instead of scaling out servers, AWS abstracts database servers behind the concept of capacity: you decide how many database requests the table can handle rather than how many database servers it runs on. Behind the scenes, the effect is much like scaling database servers.

DynamoDB publishes consumed table capacity to CloudWatch. If consumed capacity exceeds (or falls below) your target utilization for a specific length of time, CloudWatch triggers an alarm. The alarm invokes Application Auto Scaling, which evaluates your scaling policy and issues an UpdateTable request to DynamoDB to adjust the table's throughput.
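A minimal sketch of this setup, assuming the AWS SDK for JavaScript v3 (`@aws-sdk/client-application-auto-scaling`). It registers the table's read and write capacity as scalable targets and attaches a target tracking policy to each. The table name, capacity bounds, policy names, and the helper names `buildScalingRequests` and `enableAutoScaling` are placeholders; the request builders are plain functions so the inputs can be inspected without calling AWS.

```javascript
// Builds Application Auto Scaling inputs for one DynamoDB capacity dimension.
// kind is "read" or "write"; tableName and the settings are placeholders.
function buildScalingRequests(tableName, kind, { min, max, targetPercent }) {
  const dimension = kind === "read" ? "ReadCapacityUnits" : "WriteCapacityUnits";
  const metric = kind === "read"
    ? "DynamoDBReadCapacityUtilization"
    : "DynamoDBWriteCapacityUtilization";
  const common = {
    ServiceNamespace: "dynamodb",
    ResourceId: `table/${tableName}`,
    ScalableDimension: `dynamodb:table:${dimension}`,
  };
  return {
    // Input for RegisterScalableTargetCommand: the minimum/maximum capacity.
    registerTarget: { ...common, MinCapacity: min, MaxCapacity: max },
    // Input for PutScalingPolicyCommand: track consumed/provisioned utilization.
    scalingPolicy: {
      ...common,
      PolicyName: `${tableName}-${kind}-target-tracking`,
      PolicyType: "TargetTrackingScaling",
      TargetTrackingScalingPolicyConfiguration: {
        TargetValue: targetPercent,
        PredefinedMetricSpecification: { PredefinedMetricType: metric },
      },
    },
  };
}

// Applies the policy to both read and write capacity.
async function enableAutoScaling(tableName, settings) {
  // CommonJS require, loaded lazily so the builder above works without the SDK installed.
  const {
    ApplicationAutoScalingClient,
    RegisterScalableTargetCommand,
    PutScalingPolicyCommand,
  } = require("@aws-sdk/client-application-auto-scaling");
  const client = new ApplicationAutoScalingClient({});
  for (const kind of ["read", "write"]) {
    const { registerTarget, scalingPolicy } = buildScalingRequests(tableName, kind, settings);
    await client.send(new RegisterScalableTargetCommand(registerTarget));
    await client.send(new PutScalingPolicyCommand(scalingPolicy));
  }
}
```

For example, `enableAutoScaling("MyTable", { min: 5, max: 100, targetPercent: 70 })`. With target tracking, Application Auto Scaling creates and manages the CloudWatch alarms itself, so you do not define them by hand.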

Limitations

There are a few limitations to this system. Some of them are not a result of Auto-Scaling itself but of DynamoDB table capacity in general; however, you are likely to run into them more often when using Auto-Scaling.

First, DynamoDB limits how often you can decrease a table's capacity: up to 4 decreases at any time per day (UTC), plus one additional decrease whenever there has been no decrease in the past hour (effectively a maximum of 27 decreases per day). For highly variable traffic, this means you can end up paying for more capacity than you actually need while you wait for the next allowed decrease.
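The decrease rule can be expressed as a small check. This is a sketch of the rule as stated above, assuming you keep track of the timestamps of your own decreases; the function name is hypothetical, and DynamoDB enforces the real limit on its side.

```javascript
const HOUR_MS = 60 * 60 * 1000;

// Returns true if another capacity decrease would be allowed at nowMs,
// given the timestamps (ms since epoch) of earlier decreases.
// Models the rule: up to 4 decreases per UTC day, plus one more
// whenever there has been no decrease in the past hour.
function canDecrease(decreaseTimesMs, nowMs) {
  const now = new Date(nowMs);
  // Midnight UTC at the start of the current day.
  const dayStart = Date.UTC(now.getUTCFullYear(), now.getUTCMonth(), now.getUTCDate());
  const today = decreaseTimesMs.filter((t) => t >= dayStart && t <= nowMs);
  if (today.length < 4) return true;
  // Daily allowance used up: only allowed if the last hour had no decrease.
  return today.every((t) => nowMs - t >= HOUR_MS);
}
```

With four decreases in the first half hour of the day, a fifth is refused until a full hour has passed since the last one.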

Additionally, Auto-Scaling does not prevent you from manually modifying throughput, and these manual adjustments don't affect the existing alarms related to Auto-Scaling. Personally, I don't recommend doing this, because you end up fighting with Auto-Scaling, but it is good to know that it is possible.

Finally, it is important to note that scaling actions don't happen instantly. It takes some time for new capacity to be provisioned and usable, so if you receive a massive, sudden influx of requests, your capacity may not scale up quickly enough to meet the increased demand. This makes Auto-Scaling best suited to traffic that rises and falls gradually rather than in sudden spikes. For most applications this is fine: traffic normally peaks during the middle of the day and tapers off overnight. Still, it is important to understand that Auto-Scaling and other changes to provisioned capacity are not instantaneous.
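To see why the delay matters, here is a toy model in which provisioned capacity reacts to demand only after a fixed number of time steps. The lag, the demand figures, and the function name are all hypothetical, not measurements of DynamoDB, and the model ignores minimum/maximum bounds and decrease limits; the point is only that a spike arriving faster than the lag produces throttled requests.

```javascript
// Toy model: provisioned capacity at step t is sized from demand at step
// t - lagSteps using the target-utilization formula, so it trails sudden changes.
// Returns the total capacity units of demand that exceed provisioned capacity.
function throttledUnits(demand, initialCapacity, targetPercent, lagSteps) {
  let throttled = 0;
  for (let t = 0; t < demand.length; t++) {
    const provisioned = t < lagSteps
      ? initialCapacity
      : Math.ceil((demand[t - lagSteps] * 100) / targetPercent);
    throttled += Math.max(0, demand[t] - provisioned);
  }
  return throttled;
}

// Steady traffic: nothing throttled. A sudden 10x spike: throttling until capacity catches up.
console.log(throttledUnits([50, 50, 50, 50, 50], 100, 70, 2)); // 0
console.log(throttledUnits([50, 50, 500, 500, 500], 100, 70, 2)); // 856
```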

On-Demand Scaling

How it works

On-Demand Scaling is an entirely different system for provisioning table capacity. It works much like serverless services such as AWS Lambda and Amazon API Gateway: the responsibility for deciding how much capacity to provision shifts from you to AWS.

This also changes how you pay for DynamoDB. Instead of paying for provisioned throughput, you pay for each request made to your table. You can use the Dynobase DynamoDB Pricing Calculator to learn more about this.

In order to enable this in JavaScript, you can do something like the following:
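A minimal sketch, assuming the AWS SDK for JavaScript v3 (`@aws-sdk/client-dynamodb`); the table name, the `pk` partition key, and the helper names are placeholders:

```javascript
// Builds CreateTable input for an on-demand table.
// The "pk" string partition key is a placeholder schema.
function buildCreateTableParams(tableName) {
  return {
    TableName: tableName,
    AttributeDefinitions: [{ AttributeName: "pk", AttributeType: "S" }],
    KeySchema: [{ AttributeName: "pk", KeyType: "HASH" }],
    // On-demand mode: no ProvisionedThroughput block is needed.
    BillingMode: "PAY_PER_REQUEST",
  };
}

async function createOnDemandTable(tableName) {
  // CommonJS require, loaded lazily so the builder works without the SDK installed.
  const { DynamoDBClient, CreateTableCommand } = require("@aws-sdk/client-dynamodb");
  const client = new DynamoDBClient({});
  return client.send(new CreateTableCommand(buildCreateTableParams(tableName)));
}
```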

The important part here is setting the BillingMode to PAY_PER_REQUEST.

You can also set BillingMode to PAY_PER_REQUEST when running an updateTable command:
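A minimal sketch, assuming the AWS SDK for JavaScript v3 (`@aws-sdk/client-dynamodb`) and a placeholder table name. It also builds the reverse request, since switching back to provisioned mode requires explicit `ProvisionedThroughput` values (the numbers you pass are your own choice); the helper names are hypothetical.

```javascript
// UpdateTable input that switches an existing table to on-demand mode.
function buildOnDemandUpdate(tableName) {
  return { TableName: tableName, BillingMode: "PAY_PER_REQUEST" };
}

// UpdateTable input for switching back to provisioned mode, which requires
// explicit throughput. Tables with global secondary indexes also need
// throughput settings for each index (not shown here).
function buildProvisionedUpdate(tableName, readUnits, writeUnits) {
  return {
    TableName: tableName,
    BillingMode: "PROVISIONED",
    ProvisionedThroughput: { ReadCapacityUnits: readUnits, WriteCapacityUnits: writeUnits },
  };
}

async function switchToOnDemand(tableName) {
  // CommonJS require, loaded lazily so the builders work without the SDK installed.
  const { DynamoDBClient, UpdateTableCommand } = require("@aws-sdk/client-dynamodb");
  const client = new DynamoDBClient({});
  return client.send(new UpdateTableCommand(buildOnDemandUpdate(tableName)));
}
```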

Of course, you can also do all of this within the AWS Console.

When moving to On-Demand Scaling or to Provisioned Capacity, all of your data will stay intact. There is no need to migrate or delete your data. Everything is handled behind the scenes seamlessly for you.

Limitations

Probably the biggest limitation of On-Demand Scaling is the fact that you can only go from Provisioned Capacity to On-Demand Scaling once per day. This is a one-direction limit, so theoretically you can go from On-Demand Scaling to Provisioned Capacity as many times as you'd like.

Additionally, DynamoDB's default throughput quotas still apply. By default, this is 40,000 read request units and 40,000 write request units per table. However, you can request a service quota increase by contacting AWS Support.

Finally, a table's indexes use On-Demand Scaling as well. You can't mix On-Demand Scaling and Provisioned Capacity between a table and its indexes.

Which to use?

Here are a few reasons you might consider Provisioned with Auto-Scaling vs On-Demand Scaling.

| Provisioned with Auto-Scaling | On-Demand Scaling |
|---|---|
| Predictable, consistent traffic | Variable traffic with lots of traffic spikes |
| Predictable cost structure, while also setting limits | Don't want to think about provisioning throughput (just want it to work) |
| | Unpredictable/Limited traffic loads (e.g. application in development) |

Remember, with Provisioned with Auto-Scaling, you are essentially paying for throughput 24/7, whereas with On-Demand Scaling, you pay per request. This means that for applications still in development or with low traffic, it might be more economical to use On-Demand Scaling and not worry about provisioning throughput. At scale, however, this can quickly change once your usage pattern becomes more consistent, and Provisioned with Auto-Scaling may become the cheaper option.
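The trade-off can be made concrete with a rough monthly estimate for one dimension (reads or writes). This is a sketch only: the prices are inputs you would take from the DynamoDB pricing page for your region, the figures in the example are invented round numbers rather than real AWS prices, the 730-hour month is an assumption, and free tier, storage, and other charges are ignored. The function name is hypothetical.

```javascript
// Rough monthly cost comparison for a single dimension (reads or writes).
// All prices are supplied by the caller; none are built in.
function monthlyCosts({
  provisionedUnits,            // average capacity units kept provisioned
  pricePerUnitHour,            // price of one provisioned unit for one hour
  requestUnitsPerMonth,        // request units consumed in on-demand mode
  pricePerMillionRequestUnits, // on-demand price per million request units
  hoursPerMonth = 730,
}) {
  // Provisioned: you pay for capacity every hour, whether or not it is used.
  const provisioned = provisionedUnits * pricePerUnitHour * hoursPerMonth;
  // On-demand: you pay only for the request units actually consumed.
  const onDemand = (requestUnitsPerMonth / 1e6) * pricePerMillionRequestUnits;
  return { provisioned, onDemand, cheaper: provisioned <= onDemand ? "provisioned" : "on-demand" };
}

// Invented prices, for illustration only.
console.log(monthlyCosts({ provisionedUnits: 10, pricePerUnitHour: 0.001,
  requestUnitsPerMonth: 1e6, pricePerMillionRequestUnits: 1 }).cheaper); // on-demand
console.log(monthlyCosts({ provisionedUnits: 100, pricePerUnitHour: 0.001,
  requestUnitsPerMonth: 200e6, pricePerMillionRequestUnits: 1 }).cheaper); // provisioned
```

Because Auto-Scaling keeps capacity above consumption by the target utilization margin, `provisionedUnits` should reflect what is actually kept provisioned over the month, not average demand.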

Conclusion

Choosing between Provisioned with Auto-Scaling and On-Demand Scaling depends on your application's specific needs and traffic patterns. If you have predictable, consistent traffic and want a predictable cost structure, Provisioned with Auto-Scaling might be the best choice. On the other hand, if your application experiences variable traffic with sudden spikes or is still in development, On-Demand Scaling offers a more flexible and potentially cost-effective solution. Understanding these options and their limitations will help you make an informed decision that aligns with your operational and financial goals.